My research focuses on ubiquitous wireless sensing and imaging, and on digital health perception using multimodal data (vision and audio). I combine signal processing, deep learning, and classical ML to build robust, data-driven systems that bridge advances in AI/ML with intelligent perception applications. I also have experience in interdisciplinary, collaborative research.

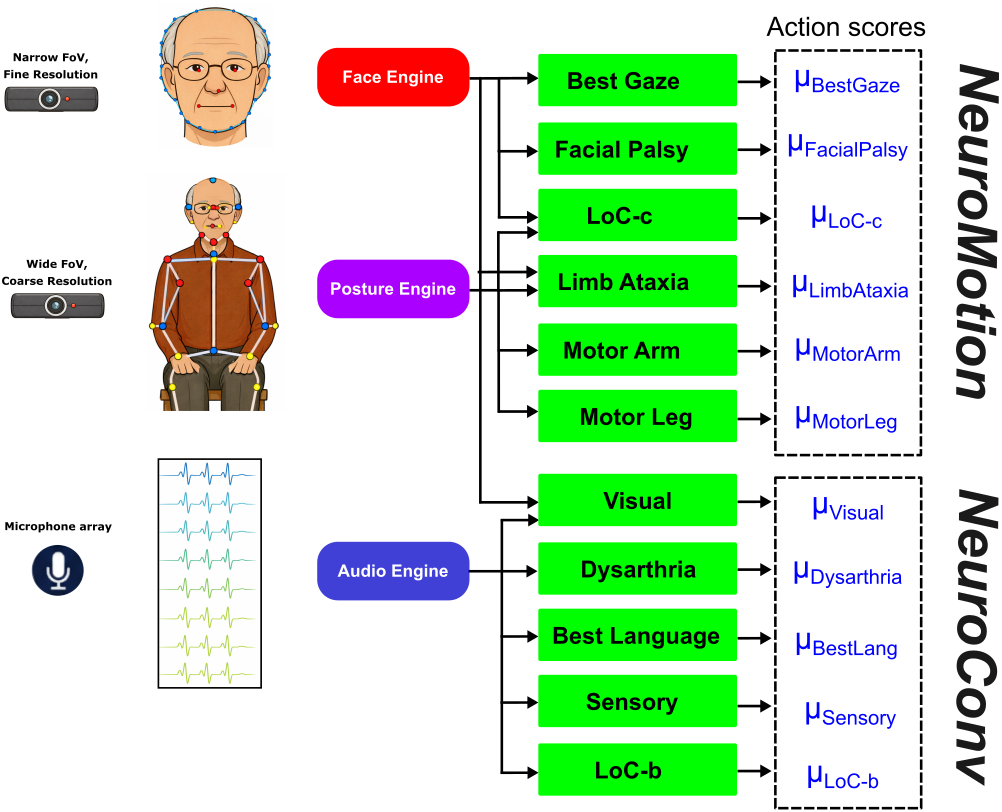

We collaborate with a team of neurologists to bring post-stroke recovery assessment to a multimodal (vision and audio) system. We developed a synchronized multi-sensor pipeline and, with the help of our collaborators from the neurology team, captured standard stroke assessment trials from stroke survivors and healthy subjects. We then explored advances in signal processing, computer vision, audio processing, and Large Language Models (LLMs) to emulate a human expert's stroke assessment from camera and microphone data. The long-term objective is to support frequent, objective, and repeatable assessments without requiring a specialized hardware setup. This would allow an automated stroke assessment pipeline to be deployed in mobile health clinics or even at home, serving stroke survivors who have severe mobility impairments or live in remote areas without accessible care.

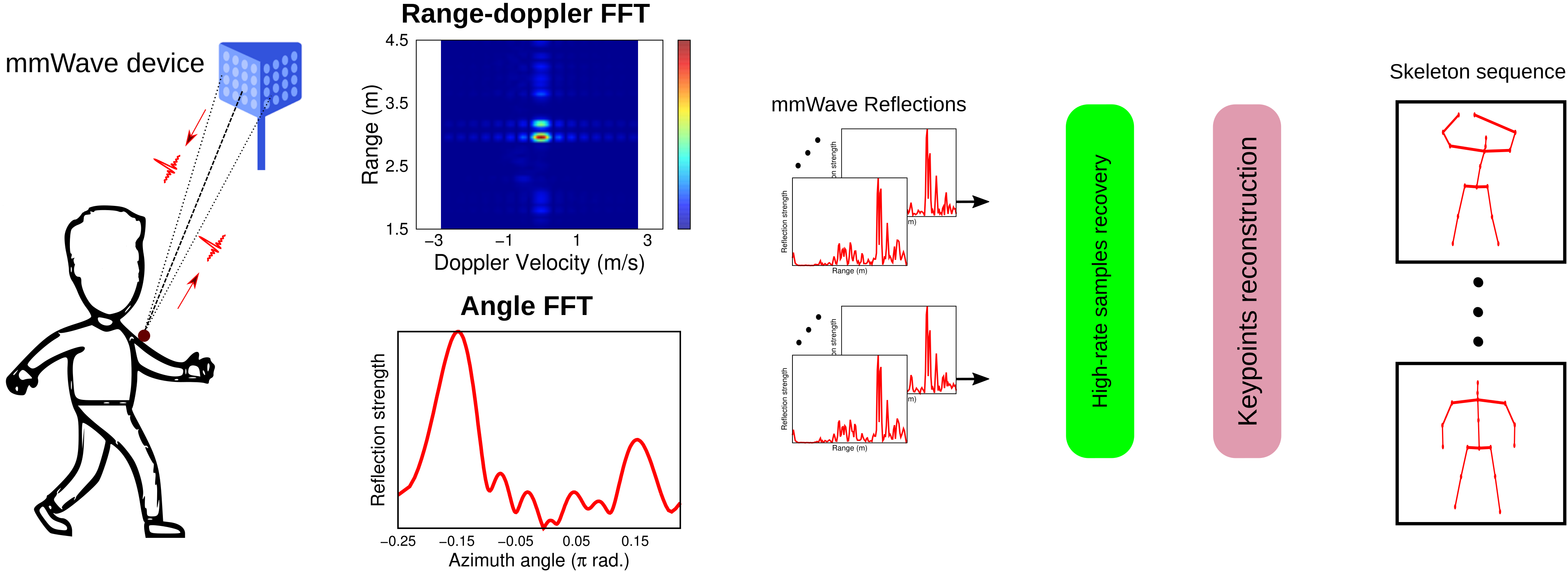

In this project, we investigate indoor human activity sensing from mmWave radio signals. MmWave is a core technology in 5G-and-beyond networking systems. Besides high-throughput, low-latency networking, the mmWave band also allows much finer-grained sensing of human movement and pose. Such sensing is of great interest to researchers exploring contactless indoor tracking of human activity for health rehabilitation and security. Wireless signals do not depend on visibility conditions, which makes them suitable for continuous indoor monitoring. Moreover, radio reflections do not capture clear images of the human body or the indoor scene, which makes such a system more privacy-aware than camera-based ones. There are broadly two challenges. First, existing networking infrastructure is not designed for concurrent networking and activity sensing. Second, wireless signals, especially high-frequency mmWave signals, suffer from high information loss due to specular reflections and to the variable reflectivity of different surfaces (reflections from moderately reflective surfaces are overshadowed by those from strong reflectors).

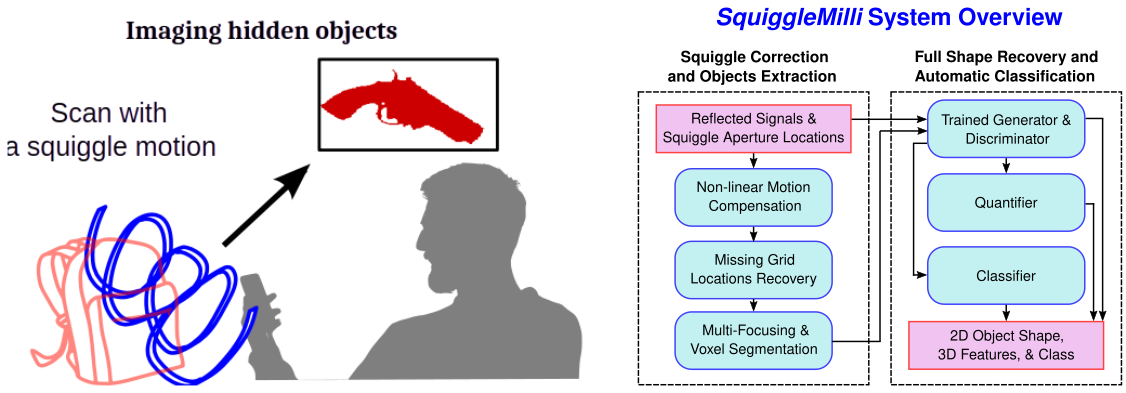

We tackle the problem of bringing imaging of hidden structures (e.g., objects under clothing or in low visibility) to handheld mmWave devices, rather than relying on specialized infrastructure. Today, this capability largely exists in bulky airport security scanners, which use millimeter-wave radio to probe through visual obstructions. Making this portable is hard for two key reasons: (1) freehand scanning motion is non-uniform and produces sparse, irregular measurements, and (2) mmWave reflections are highly specular, causing significant information loss and poor spatial detail in reconstructions. We address these challenges in three ways: we combine motion compensation with compressed sensing to robustly reconstruct shapes from freehand scanning trajectories; we use a cGAN-based super-resolution model to recover missing high-frequency details and sharpen the reconstructed images; and we apply unsupervised clustering for background cancellation and for separating multiple objects in the scene. Finally, because real paired training data are difficult to collect at scale, we use ray-tracing-based simulation to generate synthetic datasets that provide enough supervision for effective learning and generalization.
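
To give a feel for the compressed-sensing step, here is a minimal, generic sketch of sparse recovery from far fewer measurements than unknowns. It is not our reconstruction pipeline: the random Gaussian matrix `A` is only a stand-in for the real (motion-compensated, irregular) measurement operator, the solver is plain ISTA (iterative soft-thresholding), and every name and parameter value below (`ista`, `demo`, `lam`, the problem sizes) is an assumption chosen for illustration.

```python
import numpy as np


def soft_threshold(x, t):
    """Shrink every entry of x toward zero by t (proximal operator of the L1 norm)."""
    return np.sign(x) * np.maximum(np.abs(x) - t, 0.0)


def ista(A, y, lam=0.01, n_iter=1000):
    """Approximately solve min_x 0.5*||A x - y||^2 + lam*||x||_1 with ISTA."""
    # The squared spectral norm of A is the Lipschitz constant of the gradient,
    # so 1/L is a safe step size.
    L = np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        grad = A.T @ (A @ x - y)                   # gradient of the data-fit term
        x = soft_threshold(x - grad / L, lam / L)  # gradient step, then shrinkage
    return x


def demo(seed=0, n=128, m=48, k=4):
    """Recover a k-sparse vector of length n from m < n random measurements."""
    rng = np.random.default_rng(seed)
    x_true = np.zeros(n)
    support = rng.choice(n, size=k, replace=False)
    x_true[support] = rng.uniform(1.0, 2.0, size=k)
    # Hypothetical measurement operator: a random Gaussian matrix, not a radar model.
    A = rng.standard_normal((m, n)) / np.sqrt(m)
    y = A @ x_true
    return x_true, ista(A, y)


if __name__ == "__main__":
    x_true, x_hat = demo()
    rel_err = np.linalg.norm(x_hat - x_true) / np.linalg.norm(x_true)
    print(f"relative reconstruction error: {rel_err:.3f}")
```

The point of the sketch is only that a sparse scene can be recovered from sparse, incomplete samples when sparsity is enforced during reconstruction; a real handheld system would replace `A` with a physically derived operator built from the estimated scan trajectory.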

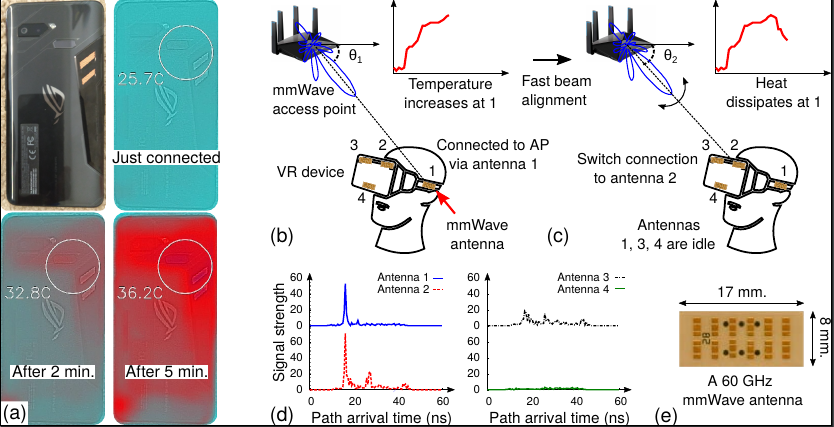

This work investigates a growing practical issue in millimeter-wave (mmWave) networking: while mmWave enables multi-Gbps, ultra-low-latency connectivity, operating at very high frequencies and wide bandwidths makes devices consume more energy and heat up quickly, often enough to hurt performance and user comfort. We first conduct a detailed thermal characterization of mmWave devices and find that heating happens rapidly: after just 10 seconds of data transfer at 1.9 Gbps, the antenna temperature can reach 68°C, which reduces throughput by 21%, increases throughput variability by 6×, and requires about 130 seconds to cool back down. Motivated by these measurements, we propose Aquilo, a temperature-aware multi-antenna network scheduler that actively manages transmissions to balance performance and thermal safety. Experiments on a real testbed in both static and mobile scenarios show that Aquilo keeps devices substantially cooler, achieving a median peak temperature within 0.5–2°C of the thermal optimum, while sacrificing less than 10% throughput.
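
The intuition behind temperature-aware scheduling can be illustrated with a toy simulation. This is not Aquilo's algorithm: the thermal model (a fixed temperature rise per active slot plus Newtonian cooling toward ambient), the greedy "coolest antenna first" policy, and all constants and names below are hypothetical and chosen purely for illustration.

```python
def simulate(n_antennas, n_slots, pick, heat=3.0, cool=0.05, ambient=30.0):
    """Run a slotted toy thermal model and return the peak temperature reached.

    pick(temps) returns the index of the antenna that transmits in the slot.
    heat, cool, and ambient are made-up constants, not measured values.
    """
    temps = [ambient] * n_antennas
    peak = ambient
    for _ in range(n_slots):
        active = pick(temps)
        for i in range(n_antennas):
            # Every antenna relaxes toward ambient (Newtonian cooling).
            temps[i] -= cool * (temps[i] - ambient)
            # Only the transmitting antenna heats up in this slot.
            if i == active:
                temps[i] += heat
        peak = max(peak, max(temps))
    return peak


def always_first(temps):
    """Baseline policy: keep transmitting on antenna 0."""
    return 0


def coolest_first(temps):
    """Greedy temperature-aware policy: transmit on the currently coolest antenna."""
    return min(range(len(temps)), key=temps.__getitem__)


if __name__ == "__main__":
    baseline = simulate(4, 100, always_first)
    aware = simulate(4, 100, coolest_first)
    print(f"peak with one antenna:     {baseline:.1f} C")
    print(f"peak with coolest-first:   {aware:.1f} C")
```

In this toy model, spreading transmissions across antennas gives each one time to cool, which lowers the peak temperature. A real scheduler must additionally account for the link quality of each antenna, which this sketch ignores entirely.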